Dispatch system for autonomous shuttles

A cross-platform application for remote autonomous shuttle management

Services

UX/UI research and design

Cross-platform app development

Product management

Technology

Google Maps

Streamlining autonomous transportation management

Autonomous transportation is nothing new: self-driving cars, buses, and shuttles are an increasingly common sight in big modern cities. But vehicle automation has yet to reach its full potential. The SAE J3016 standard defines six levels of driving automation, from level 0 (no automation) to level 5, which requires zero human involvement. Today's most advanced self-driving vehicles operate at level 4. They can drive themselves within a defined area and set of conditions, but in practice a person still monitors the process and stands ready to take over at any moment.

In fleet transportation, dispatchers (also called operators) monitor the overall state of each vehicle. They receive data from lidars, radars, internal and external cameras, and other sensors, and they track the vehicle's location on the map, its charge level, tire pressure, speed, and so on. Because there is so much information to follow, this monitoring is usually done on multiple screens or one massive display. At least, that's the common idea.

Bamboo Apps decided to challenge this idea. The team set out to design a mobile-first solution that would pack all the complex functionality of an autonomous shuttle dispatch application into a compact, precise, informative, and easy-to-navigate interface.

A feature-rich dispatch system for tablets

The Bamboo Apps team created Dispatcher, a Flutter-based proof-of-concept (PoC) application for 10.2-inch tablets. The software lets users track the location and state of autonomous shuttles, create new routes, stop vehicles and take control of them in an emergency, contact passengers, and receive alerts as notifications.

Because it is built with a cross-platform framework, the app runs on both Android and iOS devices. This mobile format drastically reduces the space and resources needed to run a dispatch system for autonomous public transport.

Pushing the envelope of autonomous vehicle control

The software includes all the features needed for the stable management of autonomous shuttles.

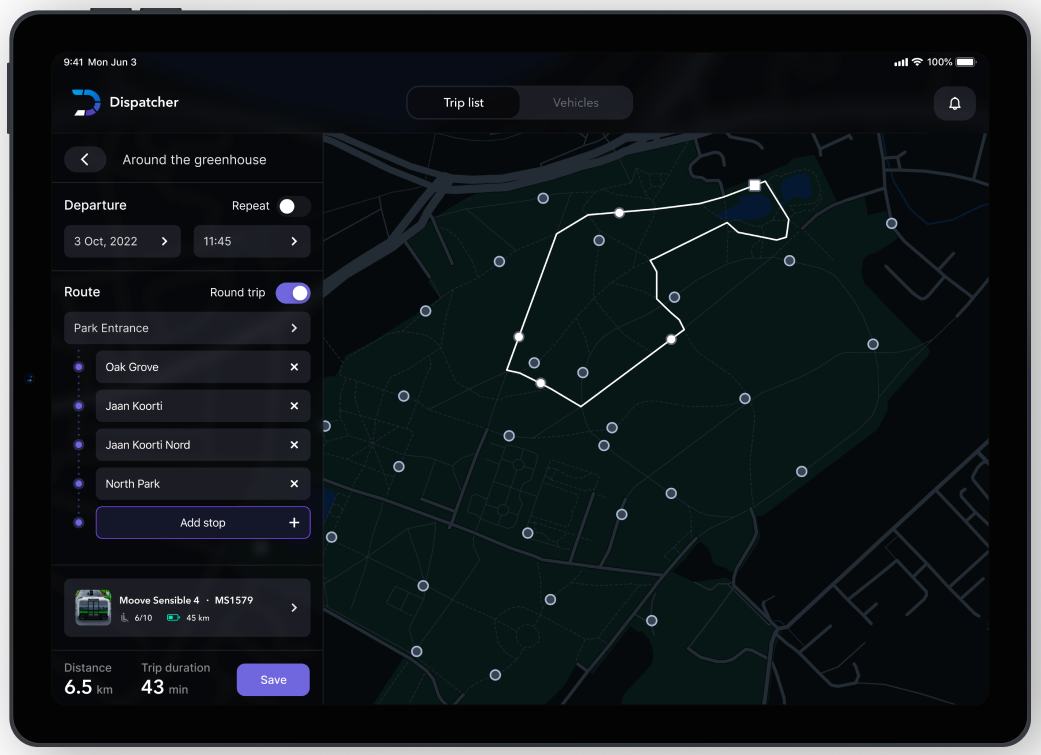

Creating a new route

Dispatchers create a new route by setting several parameters. First, they set the date and time when the trip begins. Second, they choose the stops the shuttle will pass through, either by typing in destination names or by selecting the points on the map. Finally, they can set the route to loop.

Once the parameters are entered, the app forecasts the approximate arrival time at each destination and how long the entire trip (or a single loop) will take. The user also sees the route's total distance and the battery percentage needed to complete it. They can then assign a specific unit to the route based on its passenger capacity and charge level.
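The case study doesn't say how Dispatcher computes these forecasts. As a rough illustration only, the sketch below assumes a constant average speed, a fixed dwell time at each stop, and a fixed battery consumption per kilometre. All three constants, as well as the names `forecast_route` and `pick_shuttle`, are hypothetical. The sketch accumulates arrival times leg by leg and then picks a unit that has enough seats and enough charge, plus an assumed safety reserve.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical planning constants: the case study does not say how Dispatcher forecasts.
AVG_SPEED_KMH = 20.0       # assumed average shuttle speed
DWELL_MIN = 1.0            # assumed time spent at each intermediate stop, in minutes
BATTERY_PCT_PER_KM = 0.8   # assumed energy use, in % of a full battery per km


@dataclass
class Shuttle:
    unit_id: str
    capacity: int
    battery_pct: float


@dataclass
class RouteForecast:
    etas: list[datetime]       # arrival time at every stop after the first
    trip_minutes: float        # duration of one pass (or one loop)
    distance_km: float
    battery_needed_pct: float


def forecast_route(start: datetime, leg_km: list[float]) -> RouteForecast:
    """leg_km[i] is the distance from stop i to stop i + 1.
    For a looped route, include the leg from the last stop back to the first."""
    etas: list[datetime] = []
    t = start
    for i, km in enumerate(leg_km):
        if i > 0:
            t += timedelta(minutes=DWELL_MIN)  # dwell at the stop just reached
        t += timedelta(hours=km / AVG_SPEED_KMH)
        etas.append(t)
    distance = sum(leg_km)
    return RouteForecast(
        etas=etas,
        trip_minutes=(t - start).total_seconds() / 60,
        distance_km=distance,
        battery_needed_pct=distance * BATTERY_PCT_PER_KM,
    )


def pick_shuttle(fleet: list[Shuttle], passengers: int, needed_pct: float,
                 reserve_pct: float = 10.0) -> Optional[Shuttle]:
    """Return a unit with enough seats and enough charge plus an assumed safety
    reserve, preferring the smallest sufficient capacity, then the highest charge."""
    candidates = [s for s in fleet
                  if s.capacity >= passengers and s.battery_pct >= needed_pct + reserve_pct]
    return min(candidates, key=lambda s: (s.capacity, -s.battery_pct), default=None)
```

With these assumed constants, a trip starting at 09:00 over legs of 2 km and 3 km arrives at 09:06 and 09:16 and needs 4% of the battery. Preferring the smallest sufficient capacity keeps larger shuttles free for busier routes. A real dispatcher might weigh this trade-off differently.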

Ongoing trips

The interface shows the dispatcher all ongoing trips in real time on a large map. For each trip, the dashboard shows how many passengers are on board, the shuttle's battery level and how long that charge will last, its speed, and the estimated arrival time at its next destination. A progress bar shows how far along its route the shuttle is.
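The two dashboard figures that require computation are the trip progress and the remaining charge time. The following is a minimal sketch of each. It makes two assumptions: that progress is measured by distance along the route, and that consumption and speed stay constant. Both assumptions and both function names are hypothetical, not taken from Dispatcher.

```python
from __future__ import annotations

from bisect import bisect_right


def trip_progress(stop_km: list[float], travelled_km: float) -> tuple[int, float]:
    """stop_km holds each stop's cumulative distance from the start (stop_km[0] == 0).
    Returns the index of the last stop passed and the fraction of the route completed."""
    total = stop_km[-1]
    travelled = min(max(travelled_km, 0.0), total)   # clamp positioning noise to the route
    last_passed = bisect_right(stop_km, travelled) - 1
    return last_passed, travelled / total


def charge_minutes_left(battery_pct: float, pct_per_km: float, avg_speed_kmh: float) -> float:
    """Time the remaining charge will last, assuming constant consumption and speed."""
    range_km = battery_pct / pct_per_km
    return range_km / avg_speed_kmh * 60
```

The binary search (`bisect_right`) finds the last stop passed without scanning every stop. That matters little for a single shuttle, but the cost adds up when a map is refreshing many trips at once.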

The trip information panel lets the dispatch operator monitor the state of the vehicle in real time. Besides radar, lidar, and numerous other sensors, each shuttle is equipped with 6 cameras (3 external and 3 internal) that stream live video to the dispatcher. The internal cameras can also be used for a video call with passengers in an emergency.

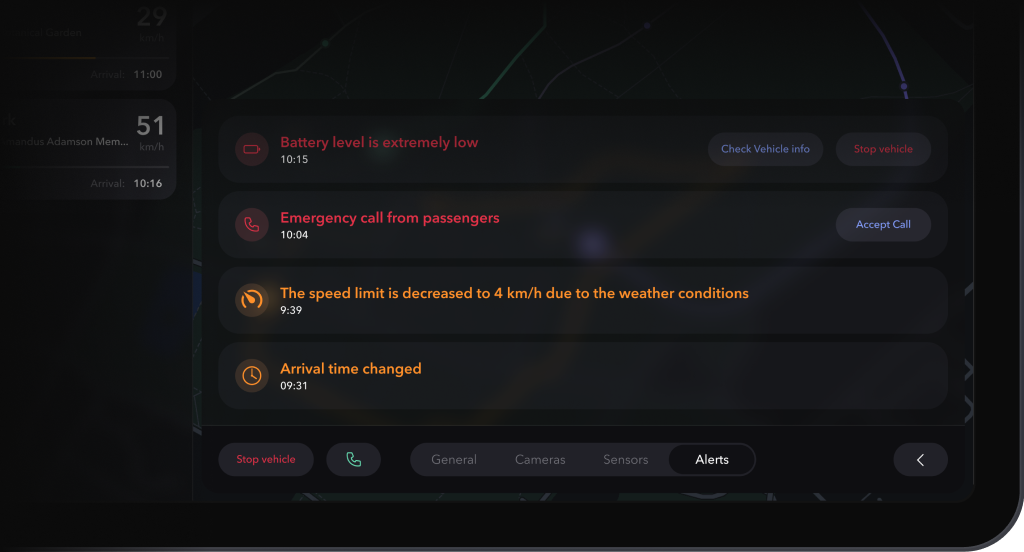

Notification bar

All emergencies are reported to the dispatcher through instant notifications. An alert is issued for a slippery road, a serious obstacle, a passenger falling, a passenger pressing the emergency stop button, low battery, low tire pressure, and other mishaps. The dispatcher can view and address these alerts in the notifications bar.

For example, when a passenger presses the emergency stop button, the dispatcher gets a notification and is given the option to call the passengers through the shuttle's internal cameras. After the call, the dispatcher taps “Resume motion” to restart the shuttle and returns to the dashboard.
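The emergency-stop flow described above can be modelled as a small state machine. The following is a hypothetical sketch: the alert kinds come from the list above, but the class and function names (`NotificationBar`, `call_passengers`, `resume_motion`) are illustrative. The real app's notification service is not described. A video call is optional, matching the text, and “Resume motion” ends any call, restarts the shuttle, and resolves the alert.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum, auto


class AlertKind(Enum):
    # Alert types named in the case study; the identifiers themselves are hypothetical.
    SLIPPERY_ROAD = auto()
    OBSTACLE = auto()
    PASSENGER_FALL = auto()
    EMERGENCY_STOP = auto()
    LOW_BATTERY = auto()
    LOW_TIRE_PRESSURE = auto()


class Motion(Enum):
    MOVING = auto()
    STOPPED = auto()


@dataclass
class ShuttleState:
    shuttle_id: str
    motion: Motion = Motion.MOVING
    on_call: bool = False


@dataclass
class Alert:
    shuttle_id: str
    kind: AlertKind
    resolved: bool = False


@dataclass
class NotificationBar:
    alerts: list[Alert] = field(default_factory=list)

    def push(self, shuttle: ShuttleState, kind: AlertKind) -> Alert:
        """Record an incoming alert; an emergency stop also halts the shuttle."""
        if kind is AlertKind.EMERGENCY_STOP:
            shuttle.motion = Motion.STOPPED
        alert = Alert(shuttle.shuttle_id, kind)
        self.alerts.append(alert)
        return alert

    def open_alerts(self) -> list[Alert]:
        return [a for a in self.alerts if not a.resolved]


def call_passengers(shuttle: ShuttleState) -> None:
    # Stand-in for starting a video call through the shuttle's internal cameras.
    shuttle.on_call = True


def resume_motion(shuttle: ShuttleState, alert: Alert) -> None:
    """The dispatcher's "Resume motion" action: end any call, restart, resolve the alert."""
    if alert.shuttle_id != shuttle.shuttle_id:
        raise ValueError("alert belongs to a different shuttle")
    shuttle.on_call = False
    shuttle.motion = Motion.MOVING
    alert.resolved = True
```

Only the emergency stop changes the shuttle's motion in this sketch. Other alerts, such as low battery, are simply queued for the dispatcher to review.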

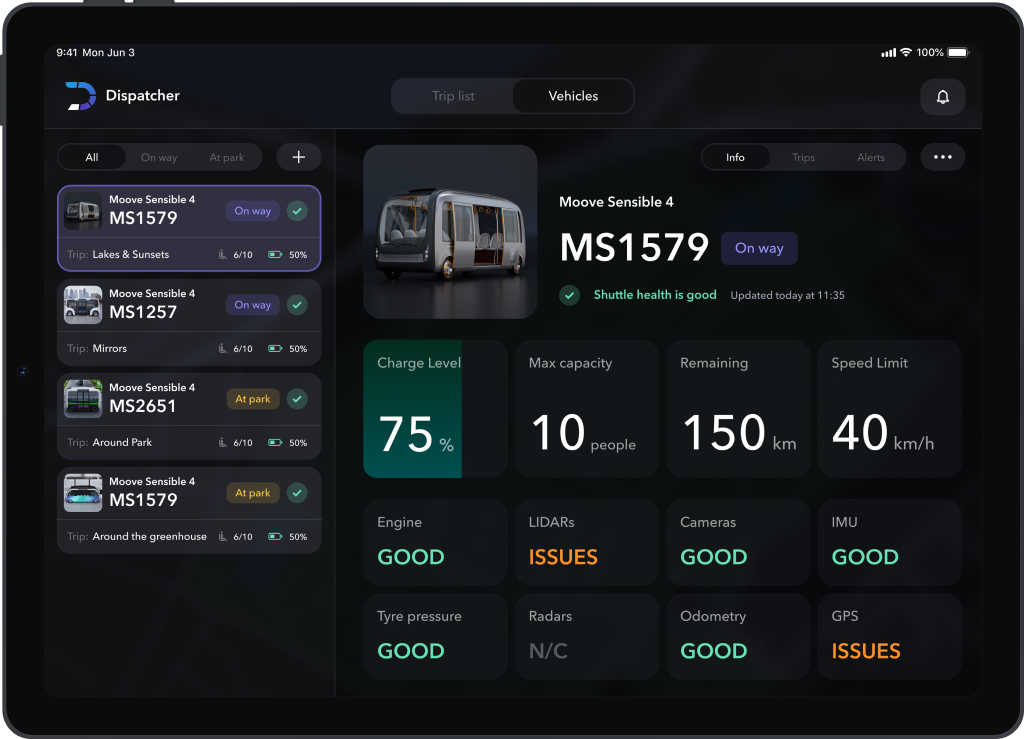

Detailed vehicle information

The dispatcher can view a list of all shuttles, both on the road and parked at the depot. For each shuttle, the list shows the date of its last trip, its passenger capacity, kilometres travelled, its speed limit, and a brief summary of the state of all its sensors.

There is also a general health status, determined by the sum of all the parameters above.
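One way to read “the sum of all the parameters” is a weighted score. The sketch below assumes that each sensor reports OK, WARNING, or FAULT, and that these map to point values which are added up and compared against thresholds. The weights, the thresholds, and the status labels are all hypothetical, not values from Dispatcher.

```python
from __future__ import annotations

from enum import IntEnum


class SensorStatus(IntEnum):
    # Hypothetical weights: a fault counts as much as three warnings.
    OK = 0
    WARNING = 1
    FAULT = 3


def health_status(sensors: dict[str, SensorStatus],
                  attention_at: int = 1, critical_at: int = 3) -> str:
    """Sum the per-sensor scores and map the total to an overall status.
    The thresholds are illustrative defaults, not values from Dispatcher."""
    score = sum(sensors.values())
    if score >= critical_at:
        return "critical"
    if score >= attention_at:
        return "attention"
    return "healthy"
```

Weighting a fault higher than a warning means a single failed sensor flags the shuttle as critical. Several minor warnings can reach the same level only when they pile up.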

Creating the ultimate design

Dispatcher was developed by a team of 6: a product manager, a project manager, a tech lead, a designer, and 2 developers. It was built with the cross-platform framework Flutter, with 10.2-inch tablet screens in mind. For this project, Flutter had to be integrated with Google Maps and a notification service. Integration with GPS services also allows the app to function offline.

Software like this is typically developed for multiple monitors, which is understandable, considering the volume of information an autonomous vehicle operator needs to process. However, the team wanted to test how usable a complex dispatch system like this would be on a single tablet screen.

Constructing the UI

The UI had to stay usable and readable while remaining informative and preventing misclicks. That is especially crucial for software that manages autonomous transport carrying human passengers: if the dispatcher cannot react quickly and appropriately to a critical situation, the consequences could be dire.

This is why the team conducted rigorous research and testing to ensure that the design of the interface met all the requirements in terms of precision and on-screen information volume.

Setting up a mock server

Another challenge the team faced in the early stages of development was the lack of an actual shuttle fleet to test the dispatcher system with. The solution came in the form of a mock server.

It hosted all the “shuttle” data necessary to check the application’s performance and functionality: live video feed, sensor information, alerts, and so on.
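The case study doesn't describe the mock server's internals. The following is a minimal sketch of the data-generation half of such a server, limited to telemetry and alerts (video is not covered). The message fields, value ranges, alert thresholds, and the `MockShuttle` class are all hypothetical. A seeded random generator makes each run reproducible, which helps when the same scenario has to be replayed against the app.

```python
from __future__ import annotations

import json
import random
from dataclasses import asdict, dataclass


@dataclass
class Telemetry:
    # Hypothetical message shape; the real mock server's format is not described.
    shuttle_id: str
    tick: int
    lat: float
    lon: float
    speed_kmh: float
    battery_pct: float
    tire_kpa: list[float]
    passengers: int
    alerts: list[str]


class MockShuttle:
    """Produces plausible, reproducible fake telemetry for one shuttle."""

    def __init__(self, shuttle_id: str, lat: float, lon: float,
                 seed: int = 0, event_rate: float = 0.02) -> None:
        self.rng = random.Random(seed)       # seeded so test runs are repeatable
        self.shuttle_id = shuttle_id
        self.lat, self.lon = lat, lon
        self.tick_no = 0
        self.speed = 15.0
        self.battery = 100.0
        self.passengers = 0
        self.event_rate = event_rate

    def tick(self) -> Telemetry:
        self.tick_no += 1
        self.speed = min(30.0, max(0.0, self.speed + self.rng.uniform(-2.0, 2.0)))
        self.lat += self.speed * 1e-6                      # crude drift, not real geodesy
        self.battery = max(0.0, self.battery - 0.01 * self.speed)
        self.passengers = min(12, max(0, self.passengers + self.rng.choice([-1, 0, 0, 1])))
        tires = [round(self.rng.gauss(240.0, 8.0), 1) for _ in range(4)]
        alerts = []
        if self.battery < 20.0:
            alerts.append("LOW_BATTERY")
        if min(tires) < 220.0:
            alerts.append("LOW_TIRE_PRESSURE")
        if self.rng.random() < self.event_rate:          # occasional random incidents
            alerts.append(self.rng.choice(["SLIPPERY_ROAD", "OBSTACLE", "EMERGENCY_STOP"]))
        return Telemetry(self.shuttle_id, self.tick_no, self.lat, self.lon,
                         round(self.speed, 1), round(self.battery, 2), tires,
                         self.passengers, alerts)

    def tick_json(self) -> str:
        """One telemetry frame serialised as JSON, as a server might send it."""
        return json.dumps(asdict(self.tick()))
```

A real mock server would wrap `tick_json` in an HTTP or WebSocket endpoint. That transport layer is left out here because the case study doesn't say which one was used.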

Synchronising the video feed

Synchronising video streams between different components was not an easy task. According to the app requirements, there should be zero loading time when the user navigates between screens that broadcast the same feed: the stream shouldn't need to reconnect. That is quite tricky to achieve.

The problem is that, by default, every component loads the stream individually. So whenever a user goes to a new screen, the video feed needs a couple of seconds to reconnect.

That delay is not only detrimental to the user experience but can also be dangerous in certain emergency situations.

The team solved the problem by creating independent video controllers that operate separately from the screens displayed to the user. When the user goes to a new screen, the controller from the previous screen is instantly transferred to the new component. As a result, the playback lag was completely eliminated.
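The case study doesn't show Dispatcher's Flutter code. The Python below only models the lifecycle idea described above, using hypothetical names (`VideoController`, `VideoControllerPool`, `navigate`). Controllers live in a pool above the screens, so a screen borrows the controller rather than owning it. During navigation, the new screen attaches before the old one detaches. This ordering means the stream never drops to zero viewers and never reconnects.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class VideoController:
    """Owns one live camera stream, independently of any screen."""
    camera_id: str
    connected: bool = False
    connect_count: int = 0   # how many times the slow connection handshake ran

    def ensure_connected(self) -> None:
        if not self.connected:
            self.connect_count += 1   # stand-in for the multi-second (re)connect
            self.connected = True

    def close(self) -> None:
        self.connected = False


@dataclass
class VideoControllerPool:
    """Keeps controllers alive above the screens and hands them between screens."""
    controllers: dict[str, VideoController] = field(default_factory=dict)
    users: dict[str, set[str]] = field(default_factory=dict)   # camera_id -> screens

    def attach(self, screen: str, camera_id: str) -> VideoController:
        ctrl = self.controllers.get(camera_id)
        if ctrl is None:
            ctrl = self.controllers[camera_id] = VideoController(camera_id)
        ctrl.ensure_connected()
        self.users.setdefault(camera_id, set()).add(screen)
        return ctrl

    def detach(self, screen: str, camera_id: str) -> None:
        screens = self.users.get(camera_id, set())
        screens.discard(screen)
        if not screens and camera_id in self.controllers:
            self.controllers[camera_id].close()   # no screen is watching any more

    def navigate(self, from_screen: str, to_screen: str, camera_id: str) -> VideoController:
        # Attach the new screen before detaching the old one, so the stream
        # never drops to zero viewers and never has to reconnect.
        ctrl = self.attach(to_screen, camera_id)
        self.detach(from_screen, camera_id)
        return ctrl
```

In this model, moving from the dashboard to the trip panel returns the same controller object with a single connection. A reconnect happens only after every screen has let the feed go.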

Public debut

Development of the project is complete. Dispatcher will make its public debut at the GITEX 2022 exhibition in Dubai from October 10th to 14th.