Introduction

Modern shared mobility platforms go far beyond mobile apps for services like car sharing, bike sharing, or scooter sharing. They form a comprehensive intelligent digital ecosystem that, beyond the user-facing app, typically includes:

- operator admin dashboards with fleet management, geofencing, pricing, user management, and analytics;

- backend infrastructure and integrations (payments, identity verification, IoT modules, reporting);

- supporting and external services like CRM, monitoring platforms, and business intelligence tools.

Today’s large shared mobility and ride-hailing operators use custom platforms, which give full control over architecture, user experience, and data. Scaling these platforms remains a challenge, but the long-term benefits make them a strong choice compared with off-the-shelf SaaS solutions, which mainly serve smaller local players.

With all that in mind, this article focuses primarily on custom development and on the architectural, UX, and operational decisions needed to scale platforms, from fleet expansion to regional rollout.

For greater depth of coverage, we’ve divided the article into two parts. In Part 1, we’ll concentrate on architectural and engineering challenges. In Part 2, we’ll turn to UX design and operational management.

Architecting shared mobility software for scale

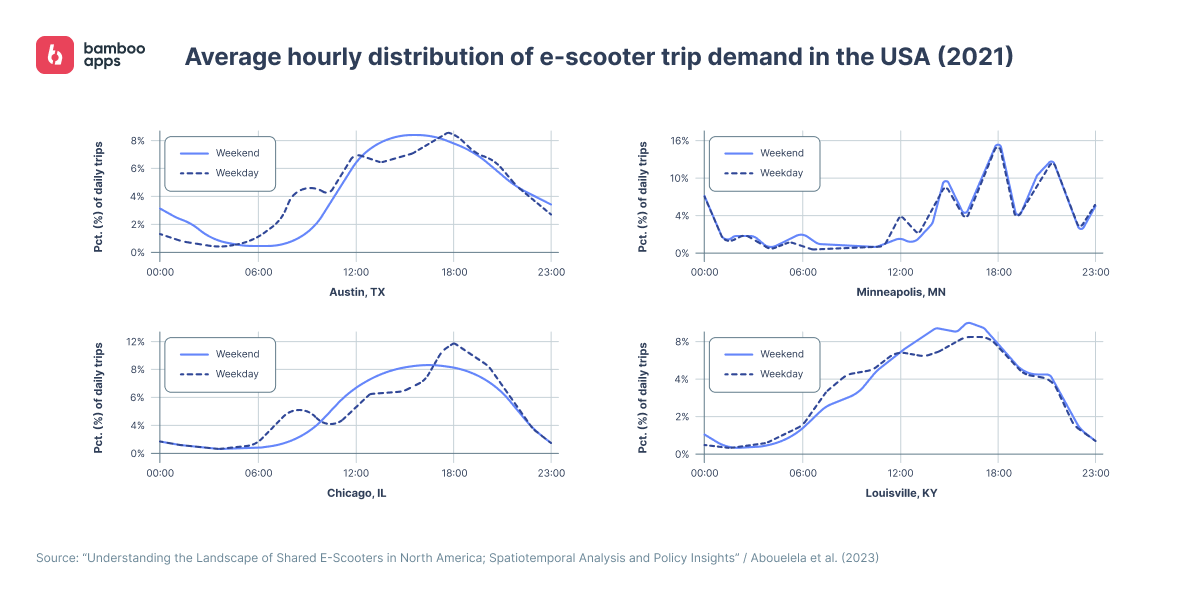

It may seem that architectural scaling challenges are mostly about handling more users or vehicles. In practice, however, the real strain often comes from user behaviour patterns and the simultaneous operations they generate.

Maxim Leykin, PhD, Chief Technology Officer at Bamboo Apps:

“For example, if a shared mobility company operates two large fleets – one in the U.S. and one in a major urban area in India – the number of simultaneous operations likely won’t hit critical thresholds due to the time zone difference between the two regions.”

But things change dramatically when usage spikes coincide in the same or neighbouring high-density regions. For example, a Friday evening surge on the U.S. East Coast can strain the system far more than expanding operations into new Asian markets. Sudden traffic surges, overlapping ride requests, and live data from tens of thousands of vehicles can overwhelm the system within minutes. Even infrastructure that has consistently supported fleet growth can quickly hit its breaking point.

Typical symptoms in such scenarios include:

- delays in real-time data processing of GPS and telemetry from vehicles;

- booking system failures, especially when handling multiple time zones and user groups simultaneously;

- pricing logic issues, along with disruptions in availability checks and IoT communication.

It’s explosive rather than linear load growth that tends to overwhelm traditional monolithic systems, and there’s no silver bullet for it. That’s why, even without immediate scaling plans, any smart mobility platform should be flexible by design and ready to handle key risks from day one.

Given this, reasonable approaches include:

- an event-driven distributed backend architecture to reduce interdependencies between components;

- caching high-frequency data to ease the load on core services;

- using microservices to scale critical modules such as booking or billing independently.

We’ll explore these practices in greater detail later. In the meantime, Maxim Leykin, CTO at Bamboo Apps and our subject-matter expert, clarifies that a properly designed and implemented system for peak loads should perform at least the following functions:

- distribute load across resource groups using load-balancing tools;

- automatically provide additional resources when needed;

- defer some non-critical real-time operations such as analytics, complex business logic, or visualisation;

- predict excessive load (using statistical analysis and machine learning tools) and alert staff in advance;

- account for security context and detect intentionally created overloads, taking countermeasures against them.
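
One of the functions above, deferring non-critical work during a spike, can be sketched in a few lines. This is a minimal illustration rather than a production design: the `PeakLoadGate` class, the operation names, and the idea of a fixed per-tick capacity are all assumptions made for the example.

```python
from collections import deque
from dataclasses import dataclass, field

# Hypothetical operation kinds; which ones count as critical is an assumption.
CRITICAL = {"unlock", "booking", "payment"}


@dataclass
class PeakLoadGate:
    capacity: int                                  # operations handled per tick (assumed unit)
    deferred: deque = field(default_factory=deque)

    def handle(self, operations: list[str]) -> list[str]:
        """Return operations to run now; park non-critical overflow for later."""
        critical = [op for op in operations if op in CRITICAL]
        other = [op for op in operations if op not in CRITICAL]
        # Critical work always runs; only the leftover capacity goes to the rest.
        spare = max(self.capacity - len(critical), 0)
        self.deferred.extend(other[spare:])
        return critical + other[:spare]

    def drain(self, budget: int) -> list[str]:
        """Release up to `budget` deferred operations once the spike has passed."""
        count = min(budget, len(self.deferred))
        return [self.deferred.popleft() for _ in range(count)]


gate = PeakLoadGate(capacity=3)
run_now = gate.handle(["unlock", "analytics", "booking", "heatmap", "report"])
```

Here `run_now` contains the two critical operations plus one analytics job, while the remaining two jobs wait in `gate.deferred` until `drain` is called. Critical operations are never deferred in this sketch, which is where load balancing and automatic provisioning would have to take over.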

Selective microservices vs. complete architecture migration

Microservices remain a popular trend, and some companies may choose to migrate their systems to them all at once, rather than introducing individual modules or functions.

This approach remains controversial, and we won’t advocate for or against it. However, when evaluating whether it makes sense, Maxim recommends first asking: what specific problems is the team trying to solve with microservices at this stage?

In most cases, there’s a less costly and less painful alternative to replacing the entire architecture.

Maxim Leykin, PhD, Chief Technology Officer at Bamboo Apps:

“Suppose we’re still planning to move toward microservices. It’s important to understand that all the potential benefits of a microservice architecture can easily be lost if we don’t pay enough attention to domain boundaries, service communication, and proper functional decomposition. In that case, we risk ending up with a ‘distributed monolith’ – a tightly coupled system that’s a total nightmare to maintain and support. This is no less relevant for shared mobility technology.”

It’s also important to emphasise that a complete migration is a high-risk, high-stakes decision that won’t suit every company. It demands team maturity, substantial resources, and a cautious approach.

Solving some architectural challenges: real-world cases and research

Bolt: scaling transactional systems with distributed SQL

The Bolt case illustrates a successful shift from monolithic databases to horizontally scalable transactional systems – covering billing, order management, and IoT data – during a period of hypergrowth.

By 2022, Bolt served over 100 million users across 500 cities. At the same time, the company had relied on MySQL as its backend database for years and was starting to run into challenges:

- managing hundreds of TBs to 1 PB in data volume;

- deploying more than 100 services every day;

- making more than 100 database changes every week;

- generating 5–10 TBs of new data every month;

- hitting the size limitation of a single MySQL instance.

By the time Bolt’s architecture reached the 8 TB limit of a single MySQL instance, the company faced several critical issues:

- backup and recovery operations took many hours;

- schema changes took up to two weeks, causing database freezes lasting several seconds during table drops;

- the MySQL master switch caused downtime during updates, resulting in poor connection handling during peak loads.

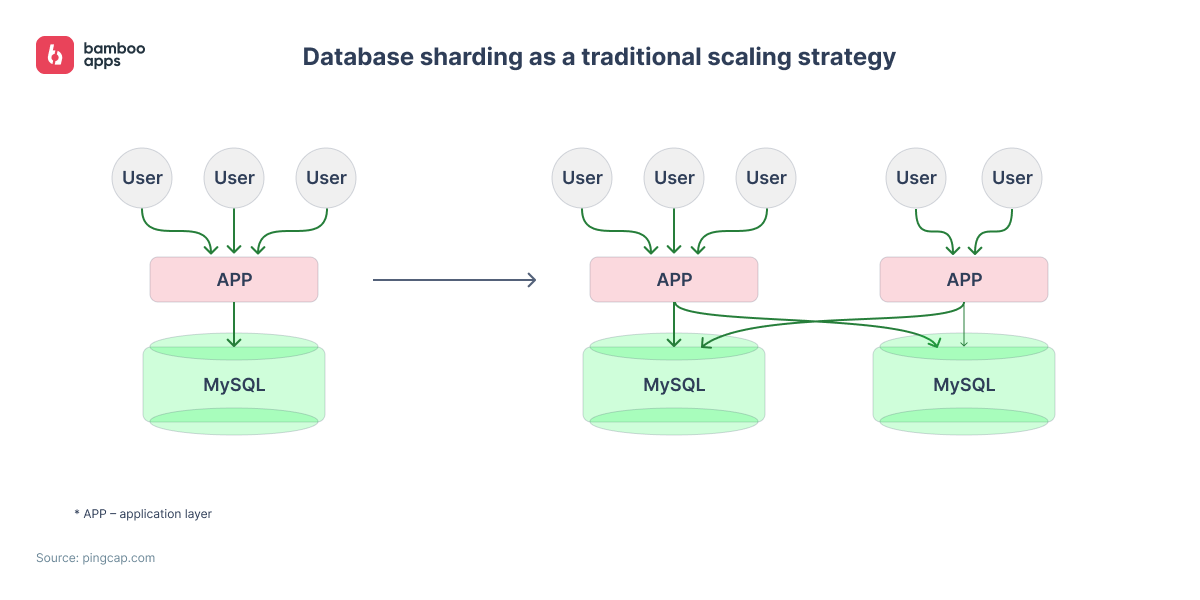

A more traditional approach to this problem would likely have involved internal database sharding. To support more connected users, the team would spin up additional application servers, create several instances of the internal database, and distribute a large table, split into multiple subtables, across those instances.

However, this approach would have introduced several challenges for the team, including:

- the need to rewrite large portions of the application code;

- loss of certain SQL functionality, particularly JOIN operations;

- increased complexity in splitting and redistributing data as the system scales;

- difficulties maintaining schema consistency across database segments;

- complications with failure recovery and creating consistent database snapshots during backups.
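
The redistribution problem in particular is easy to underestimate. The sketch below is not Bolt's setup; it assumes a naive modulo placement scheme and hypothetical integer ride IDs, and simply counts how many rows change shards when a fifth shard is added to four.

```python
def shard_for(ride_id: int, shard_count: int) -> int:
    """Naive modulo placement, a common starting point for manual sharding."""
    return ride_id % shard_count


def moved_rows(ride_ids: range, old_count: int, new_count: int) -> int:
    """Count rows whose shard changes when the number of shards changes."""
    return sum(
        1 for ride_id in ride_ids
        if shard_for(ride_id, old_count) != shard_for(ride_id, new_count)
    )


ride_ids = range(1000)  # hypothetical primary keys
moved = moved_rows(ride_ids, old_count=4, new_count=5)
print(f"{moved} of {len(ride_ids)} rows must be copied to a different shard")
# prints "800 of 1000 rows must be copied to a different shard"
```

With this scheme, adding a single shard relocates 80% of the rows. Consistent hashing or range-based splitting reduces that share, but the application then has to own the routing logic, which is the kind of complexity a distributed SQL database is meant to absorb.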

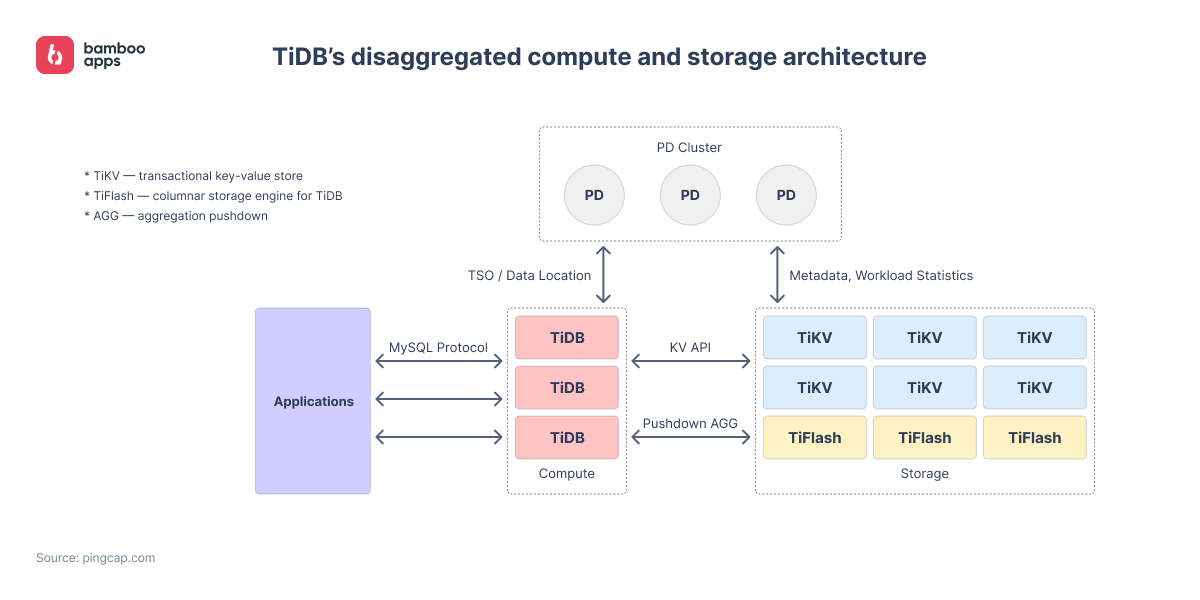

Instead, Bolt re-architected its system around TiDB – a horizontally scalable SQL database built for cloud-native environments and deployed on AWS and Kubernetes.

As an open-source database, TiDB supported online schema changes out of the box, ensuring that updates didn’t block high-priority queries. Each node handled a portion of the workload: it scanned the relevant data ranges, generated SST files, and uploaded them to storage. Meanwhile, new operations (e.g., inserts, updates, deletions) were continuously tracked and logged.

Overall, this solution allowed Bolt to:

- add more database nodes to handle growing data volumes and requests per second;

- avoid compromises in SQL syntax, changes to application-level code, or nonstandard schema modifications;

- reduce read/write latency at scale, averaging under 99 milliseconds even with hundreds of terabytes of data;

- achieve 99.99% uptime with resilience to failures at the availability zone (AZ) level.

As a result, the system handled over 35,000 queries per second (QPS), ensured strong data consistency, and scaled with zero downtime – all while supporting 400% average annual user growth.

Tier: modelling intelligent load balancing in multi-cluster service mesh environments

In microservice architectures spread across multiple geographic regions, routing traffic between service replicas can be challenging. Traditional methods like round-robin or Domain Name System (DNS)-based load balancing often fall short when dealing with network latency changes, server performance differences, or shifting traffic loads.

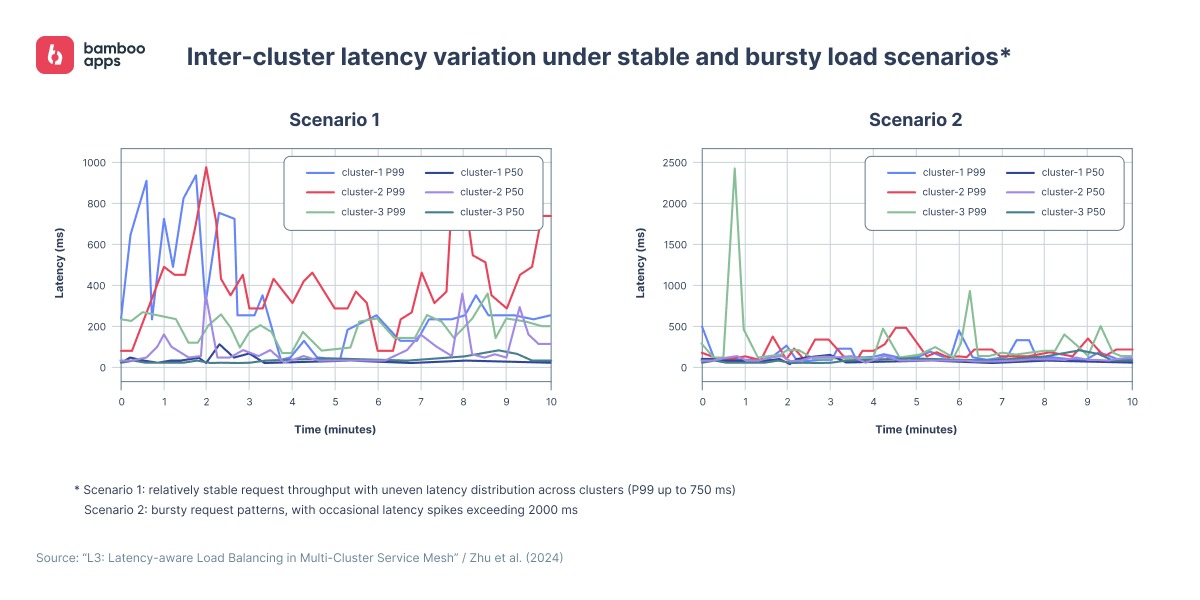

Tier Mobility, a global micromobility operator, faced a similar situation. Its backend had grown to over 200 microservices in production. The system was deployed in a multi-cluster architecture with geographically distributed locations to ensure high availability and proximity to users. However, as the infrastructure expanded, it exposed several challenges typical of distributed systems:

- high tail latency variability: the 99th-percentile latency (P99), the threshold that 99% of requests stay under, ranged from a few hundred milliseconds to over two seconds depending on the scenario, while median latency (P50) stayed within a few to a few dozen milliseconds;

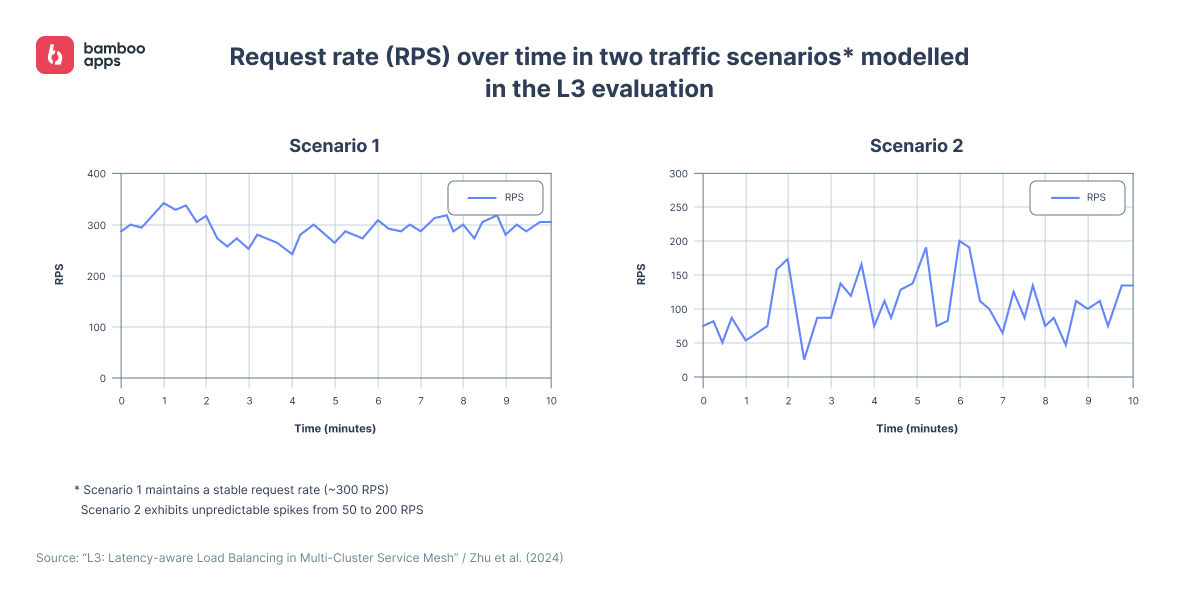

- unstable load patterns: some scenarios showed steady throughput in the hundreds of requests per second (RPS), while others experienced sudden spikes from a few dozen to over 200 RPS, making traffic peaks difficult to predict;

- WAN link variability: latency across wide-area network (WAN) connections changed over time, introducing unpredictability in inter-cluster communication;

- dynamic routing: routing paths between clusters could shift every few seconds, further amplifying latency variability;

- inter-cluster dependencies: services that relied on cross-cluster (e.g., cross-region) calls incurred additional latency in their request chains;

- unreliable geographic proximity: the geographically closest cluster didn’t always provide the lowest latency due to the combined effects of the factors above.
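
To make the gap between P50 and P99 concrete, here is a small sketch that computes both with the nearest-rank method. The latency samples are invented for illustration and are not Tier's data.

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(math.ceil(p * len(ordered) / 100), 1)
    return ordered[rank - 1]


# Hypothetical latencies in milliseconds: 98 fast requests and 2 slow ones.
latencies_ms = [20.0] * 98 + [1800.0, 2100.0]
p50 = percentile(latencies_ms, 50)   # 20.0
p99 = percentile(latencies_ms, 99)   # 1800.0
```

Two slow requests out of a hundred leave the median untouched yet push P99 to 1.8 seconds, which is why tail latency, not the average, is the metric to watch in request chains that cross clusters.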

Researchers from the Technical University of Berlin and Eindhoven University of Technology proposed L3, a latency-aware load balancing mechanism, to address these challenges. Their findings show that intelligent traffic routing based on latency metrics can improve both user experience and system stability, without requiring major infrastructure changes.

To evaluate the effectiveness of this approach, the researchers built two representative test scenarios based on real traffic patterns observed in Tier Mobility’s production system. Tail latency and RPS were measured using live traffic from Tier’s production logs, collected over 10-minute intervals from three clusters. These patterns were then modelled and replayed in a controlled environment using the DeathStarBench microservice benchmark suite.

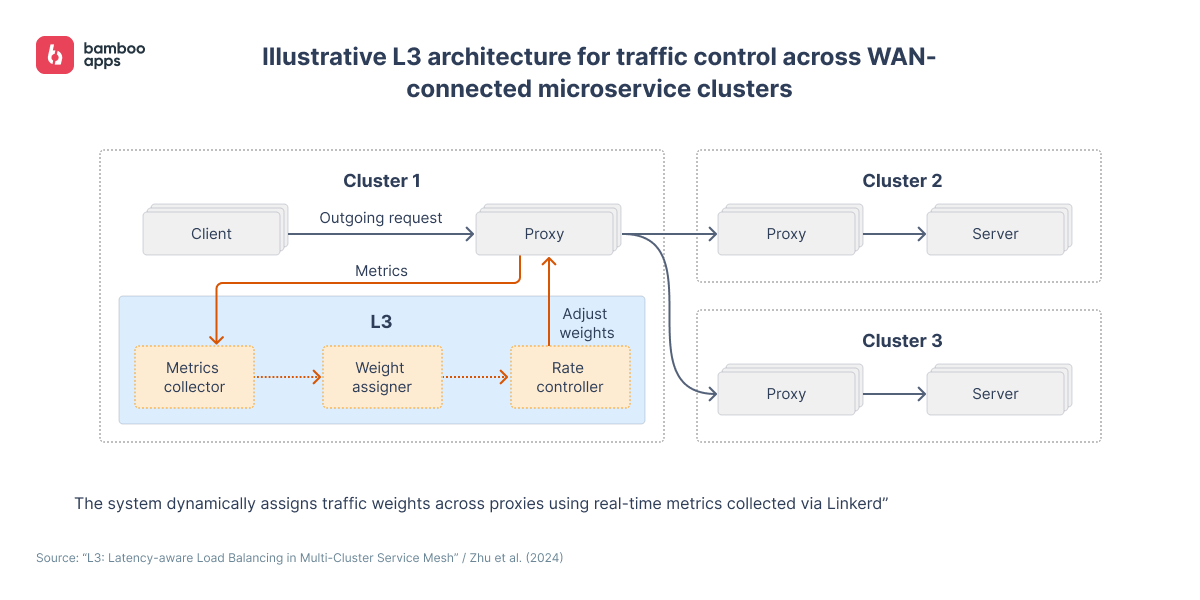

Based on the results, the mechanism operates as follows:

- L3 collects data on key metrics (tail latency, success rate, number of in-flight requests, and RPS) every five seconds via the Linkerd service mesh proxy and Prometheus;

- using these metrics, the mechanism dynamically adjusts traffic weights for microservice replicas across clusters. It updates the TrafficSplit settings in the Service Mesh Interface (SMI), sending more traffic to faster clusters and shifting load away from slower or overloaded nodes;

- to avoid metric loss, L3 uses a heuristic to preserve visibility into replica states. Even nodes with low weights continue to receive a minimal stream of requests;

- a built-in rate control algorithm helps absorb sudden RPS spikes and evenly spread the load, reducing the risk of overloading individual clusters during peak periods.
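
The weighting idea can be illustrated with a deliberately simplified sketch. It is not the algorithm from the paper: it assumes weights are set inversely proportional to each cluster's P99 latency, with a hypothetical minimum weight (`floor`) so that slow clusters keep receiving enough requests to be measured. Cluster names and numbers are invented.

```python
def traffic_weights(p99_ms: dict[str, float], total: int = 100, floor: int = 5) -> dict[str, int]:
    """Split `total` weight units inversely to tail latency, keeping a minimum per cluster."""
    if len(p99_ms) * floor > total:
        raise ValueError("floor is too high for the number of clusters")
    # Assumes every latency is positive; faster clusters get a larger inverse score.
    inverse = {name: 1.0 / latency for name, latency in p99_ms.items()}
    scale = sum(inverse.values())
    spare = total - floor * len(p99_ms)
    weights = {name: floor + int(spare * score / scale) for name, score in inverse.items()}
    # Give the rounding remainder to the fastest cluster so the weights sum to `total`.
    fastest = min(p99_ms, key=p99_ms.get)
    weights[fastest] += total - sum(weights.values())
    return weights


weights = traffic_weights({"eu-west": 200.0, "eu-central": 400.0, "us-east": 2000.0})
# {'eu-west': 59, 'eu-central': 31, 'us-east': 10}
```

In a real deployment, values like these would be written to the traffic-split configuration on each metrics refresh. The floor plays the role described above: the slowest cluster still gets a trickle of requests, so its recovery becomes visible in the next measurement window.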

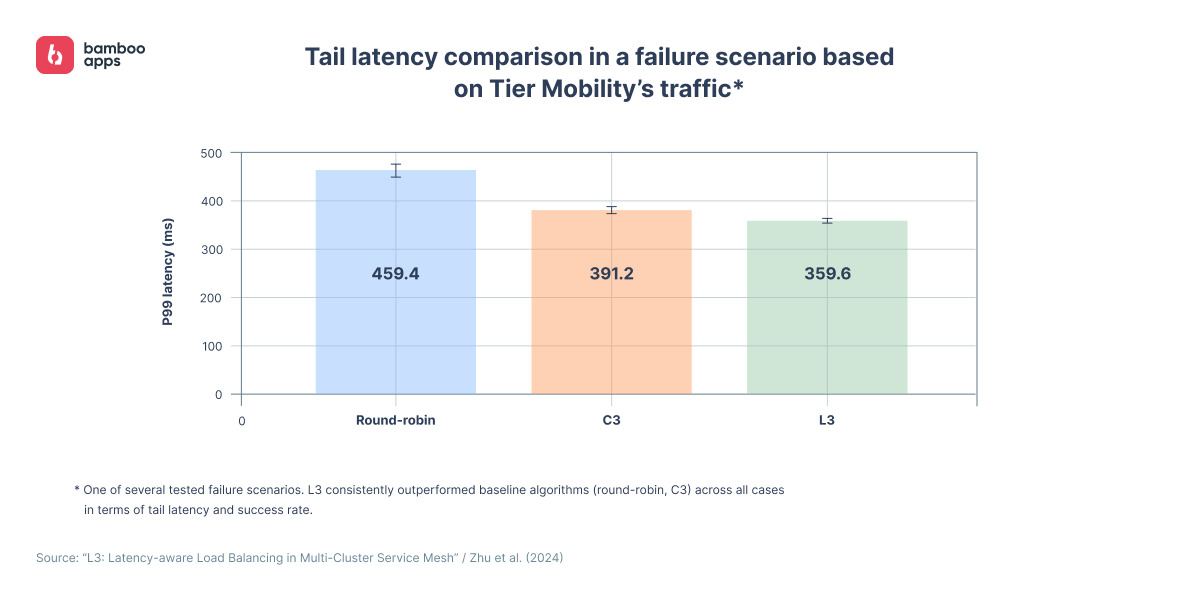

Among traditional algorithms, the researchers selected round-robin for its simplicity and C3 (Client-Controlled Congestion Control) for its adaptive replica selection capabilities. Both serve as baseline methods in latency-sensitive distributed systems.

To test how L3 performs under real-world stress, the researchers simulated a failure of one remote cluster – a common risk in multi-cluster deployments. This allowed them to assess the mechanism’s resilience to service degradation and sudden topology shifts.

Evaluation based on Tier Mobility’s traffic patterns and the DeathStarBench benchmark showed that L3 reduced tail latency by 22–26% on average compared to round-robin and the C3 algorithm. In failure scenarios, it also increased request success rates from 91.4% to 92.4% on average.

Thus, the researchers believe this approach can make Tier’s platform much more stable in highly distributed environments with unpredictable traffic patterns. The method was presented at ACM/IFIP Middleware 2024, one of the leading international conferences on distributed systems.

Why data sync issues kill trust in shared mobility platforms

Context

Let’s face it: it’s frustrating to walk a few blocks to a scooter that shows up in the app, only to find it broken. All because the app fell out of sync with the vehicle firmware and showed outdated data.

Worse yet is finally finding another scooter – only for it to die a kilometre later because the app got outdated battery data.

And the worst: the client is still being charged – due to a sync failure between the payment system and the vehicle controller.

Sure, this exact sequence of failures might be rare, but sometimes, just one of them is enough to lose a customer.

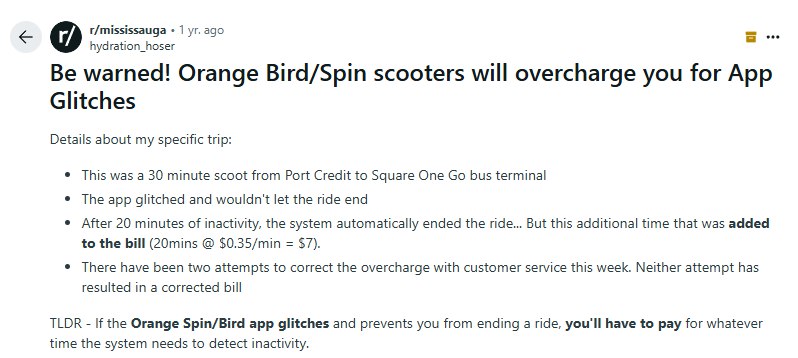

According to Anecdote, up to a quarter of Spin users regularly experience overcharges caused by unlock glitches and similar sync issues. These insights are based on user reviews from platforms like Reddit, which the aggregator analyses and compiles into reports for companies across various industries. In the shared mobility space, complaints most often target scooter sharing software and carsharing platforms, especially those used by drivers to earn income.

In some cases, posts and reviews attract media attention, creating a more serious threat to user trust in mobility operators.

These and other incidents are gradually building the case for outright scooter bans – as seen in Paris, where a city-wide referendum led to major restrictions in 2023. Since then, a clear trend has emerged: cities like Brussels, Rome, and Berlin are reducing fleet sizes, while regulations for shared mobility services continue to tighten across the EU.

In this environment, complying with safety, parking, and speed rules – and quickly addressing related software issues – is critical for any company looking to grow its scooter business, at least within Europe.

Handling data sync failures in practice

When building a custom platform, it’s essential to reduce the risk of data loss and failures across the app → backend → vehicle chain. This calls for an architecture built on several core principles, or layers of resilience.

Let’s take a closer look at these layers, based on recommendations from Maxim Leykin, CTO at Bamboo Apps, and the AWS Connected Mobility Lens – a best-practice framework for connected vehicle platforms.

1. Using lightweight and reliable IoT protocols

The first layer of resilience involves switching from heavy synchronous requests to lightweight IoT protocols with asynchronous communication. In unstable networks, MQTT or CoAP – and in some cases, AMQP – tend to perform best. These protocols support reliable, low-latency, two-way communication for telemetry and control commands.

At the same time, AWS recommends carefully matching the communication protocol to the specific use case. For example:

- HTTP/gRPC – for OTA updates;

- WebRTC or RTMP – for video streaming;

- SMS fallback – for waking up devices or executing simple commands.

Asynchronous pub/sub channels – like those using WebSockets or AWS IoT Core – prevent client requests from being blocked and help spread load more evenly during traffic spikes. This is especially important for quick actions like vehicle unlocks.

2. Data consistency and transaction management

In distributed systems, full real-time synchronisation is rarely feasible. That’s why platforms should be designed from the ground up with eventual consistency in mind. While implementation can be costly, Maxim Leykin recommends using the Saga pattern to handle critical transaction flows such as payment → unlock → ride start.

Maxim Leykin, PhD, Chief Technology Officer at Bamboo Apps:

“The Saga pattern breaks a large transaction into a series of smaller, independent local transactions. If any of them fails, compensating transactions are triggered to undo the changes made by the previously completed ones. This helps maintain data consistency across the system.

But the main limitation is that it’s primarily suited for microservice architectures. In monolithic systems, reversible database migrations and various versioning mechanisms are still commonly used instead.”

Beyond that, AWS recommends the following tools and practices:

- orchestrators to simplify step execution and compensation management;

- correlation IDs to track transactions across services;

- rollback safety principles to ensure that each local operation is fully reversible.
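
A minimal orchestrated saga for the payment → unlock → ride start flow might look like the sketch below. The step functions are stand-ins for real service calls, the in-memory `log` replaces proper tracing, and the unlock step is forced to fail so that the compensation path is visible. All names are assumptions made for the example.

```python
import uuid
from typing import Callable

# A step is (name, action, compensation); both callables receive the shared context.
Step = tuple[str, Callable[[dict], None], Callable[[dict], None]]


def run_saga(steps: list[Step], context: dict) -> bool:
    """Run steps in order; on failure, compensate completed steps in reverse order."""
    context.setdefault("correlation_id", str(uuid.uuid4()))
    context.setdefault("log", [])
    completed: list[Step] = []
    for step in steps:
        name, action, _ = step
        try:
            action(context)
        except Exception as error:
            context["log"].append(f"{name}:failed:{error}")
            for done_name, _, compensate in reversed(completed):
                compensate(context)
                context["log"].append(f"{done_name}:compensated")
            return False
        context["log"].append(f"{name}:ok")
        completed.append(step)
    return True


# Hypothetical local transactions and their compensations.
def charge(ctx: dict) -> None: ctx["charged"] = True
def refund(ctx: dict) -> None: ctx["charged"] = False
def unlock(ctx: dict) -> None: raise RuntimeError("vehicle unreachable")
def relock(ctx: dict) -> None: ctx["unlocked"] = False
def start_ride(ctx: dict) -> None: ctx["ride"] = "active"
def cancel_ride(ctx: dict) -> None: ctx["ride"] = "cancelled"


ride_context: dict = {}
succeeded = run_saga(
    [("payment", charge, refund), ("unlock", unlock, relock), ("ride_start", start_ride, cancel_ride)],
    ride_context,
)
```

Because the unlock fails, the payment is refunded and the ride never starts, so the user is not charged for a vehicle that stayed locked. The `correlation_id` in the context is what would let the same transaction be followed across services in logs.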

To speed up recovery, it’s helpful to implement runbooks that automate notification generation, incident isolation, and, when necessary, the blocking of suspicious devices via API – for example, through AWS IoT Core or an equivalent.

3. Adaptive caching and latency-tolerant design

For high-frequency data updates – such as location, pricing, and availability – it’s worth implementing multi-level caching: distributed caching, cache-aside, and write-through. In case of synchronisation failures, caching allows the application to operate in a degraded mode. However, the system must clearly define data expiration rules and trigger timely updates.

AWS also recommends adapting update frequency and prioritising critical data to filter out non-essential telemetry, reducing network load.
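
As a rough illustration of cache-aside with an expiration rule and a degraded mode, consider the sketch below. The `CacheAside` class, the in-memory store, and the choice to serve expired data only when the source raises `ConnectionError` are assumptions for the example; a real platform would more likely use a distributed cache.

```python
import time
from typing import Callable


class CacheAside:
    """Cache-aside with a TTL; serves expired data, flagged as stale, if the source is down."""

    def __init__(self, load: Callable[[str], dict], ttl_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self._load, self._ttl_s, self._clock = load, ttl_s, clock
        self._entries: dict[str, tuple[float, dict]] = {}

    def get(self, key: str) -> tuple[dict, bool]:
        """Return (value, is_stale)."""
        cached = self._entries.get(key)
        now = self._clock()
        if cached is not None and now - cached[0] < self._ttl_s:
            return cached[1], False            # fresh hit, source is not contacted
        try:
            value = self._load(key)            # miss or expired: go to the source
        except ConnectionError:
            if cached is None:
                raise                          # nothing to fall back on
            return cached[1], True             # degraded mode: expired value, flagged stale
        self._entries[key] = (now, value)
        return value, False


# Hypothetical backend and a controllable clock, for demonstration only.
fake_time = [0.0]
backend = {"up": True, "calls": 0}

def load_vehicle(vehicle_id: str) -> dict:
    backend["calls"] += 1
    if not backend["up"]:
        raise ConnectionError("backend unavailable")
    return {"vehicle_id": vehicle_id, "battery": 80}

cache = CacheAside(load_vehicle, ttl_s=10, clock=lambda: fake_time[0])
first = cache.get("scooter-7")      # loads from the backend
second = cache.get("scooter-7")     # served from the cache
fake_time[0], backend["up"] = 11.0, False
degraded = cache.get("scooter-7")   # expired and backend down: stale value returned
```

The `is_stale` flag is the important part: the app can keep showing the vehicle while telling the user, or the booking logic, that the battery level may be outdated.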

Maxim Leykin, PhD, Chief Technology Officer at Bamboo Apps:

“The most important thing is having a proper cache-handling strategy when working with multi-level caches – keeping data updated regularly and transparently for the user. It’s also essential to define and prioritise the set of operations that need to be available offline, including locking and unlocking the car, and starting or ending a trip.”

4. Event tracing, monitoring, and diagnostics

The next layer of resilience is end-to-end observability. Every action in the system – whether it’s an automatic charge or a manual unlock by an operator – should be logged with the actor ID, timestamp, and execution result. This makes it easier to investigate incidents and reproduce transactions in a test environment.
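
A structured audit record with those three fields can be as simple as one JSON line per action. The sketch below is an assumption about shape, not a prescribed schema; the `correlation_id` field and all example values are hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    actor_id: str        # user, operator, or service that triggered the action
    action: str
    result: str          # for example "ok" or "failed"
    correlation_id: str  # hypothetical field tying the event to one transaction
    timestamp: str       # ISO 8601, always in UTC


def audit_event(actor_id: str, action: str, result: str, correlation_id: str) -> str:
    """Serialise one action as a JSON line with a UTC timestamp."""
    event = AuditEvent(actor_id, action, result, correlation_id,
                       datetime.now(timezone.utc).isoformat())
    return json.dumps(asdict(event), sort_keys=True)


line = audit_event("operator-17", "manual_unlock", "ok", "ride-42")
```

Keeping timestamps in UTC avoids ambiguity when a fleet spans several time zones, and one self-contained line per event makes it straightforward to replay a transaction in a test environment.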

In its best practices guide, AWS also recommends using event tracing, automatic anomaly detection, and flexible incident escalation. Tools like CloudWatch Alarms and Lambda functions can instantly block suspicious operations or switch services to fallback mode. At the same time, critical incidents should be escalated to the admin panel with a clear status and context, so operators can quickly assess the situation and take informed action.

5. Clear operational SLAs and correction tools for the admin panel

The admin dashboard must deliver essential information without delay. To achieve this, start by defining the KPIs that matter most to operations, then build data update metrics and service-level agreements (SLAs) around them.

If real-time sync isn’t feasible, operators must have access to manual correction tools for updating trip statuses and account balances, or for unlocking blocked devices.

6. Offline-first as a foundation for resilient user flows

Finally, the platform should be designed from the ground up to operate reliably in poor connectivity conditions, such as tunnels, underground parking, or remote areas. In these scenarios, critical actions like reserving a vehicle, unlocking, starting, and ending a trip must work even without a network connection, with state synchronisation occurring once connectivity is restored.

Maxim Leykin, PhD, Chief Technology Officer at Bamboo Apps:

“The offline-first strategy matters not just in areas with weak signal. It can also help reduce the amount of data sent over the network, which can strongly affect performance and, in some cases, billing.”
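
The core of an offline-first flow is a local queue that records actions immediately and replays them in order once the connection returns. The sketch below assumes a hypothetical `send` uploader that raises `ConnectionError` while offline; conflict resolution on the server side is out of scope here.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class OfflineQueue:
    """Queue actions locally and replay them in order once a connection is available."""
    send: Callable[[dict], None]                    # hypothetical uploader
    pending: list[dict] = field(default_factory=list)

    def record(self, action: str, vehicle_id: str, sequence: int) -> None:
        self.pending.append({"action": action, "vehicle_id": vehicle_id, "sequence": sequence})

    def sync(self) -> int:
        """Send pending actions oldest first; stop at the first failure and keep the rest."""
        sent = 0
        while self.pending:
            try:
                self.send(self.pending[0])
            except ConnectionError:
                break
            self.pending.pop(0)
            sent += 1
        return sent


# Simulated connectivity, for demonstration only.
online = {"value": False}
received: list[dict] = []

def upload(event: dict) -> None:
    if not online["value"]:
        raise ConnectionError("no signal")
    received.append(event)

queue = OfflineQueue(send=upload)
queue.record("end_trip", "scooter-7", sequence=1)
first_attempt = queue.sync()     # still offline: nothing is sent, the action stays queued
online["value"] = True
second_attempt = queue.sync()    # connection restored: the action is delivered
```

An action is removed from the queue only after `send` returns, so a dropped connection mid-sync cannot lose it. The `sequence` number is there so the backend can detect duplicates if a delivery succeeds but the acknowledgement is lost.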

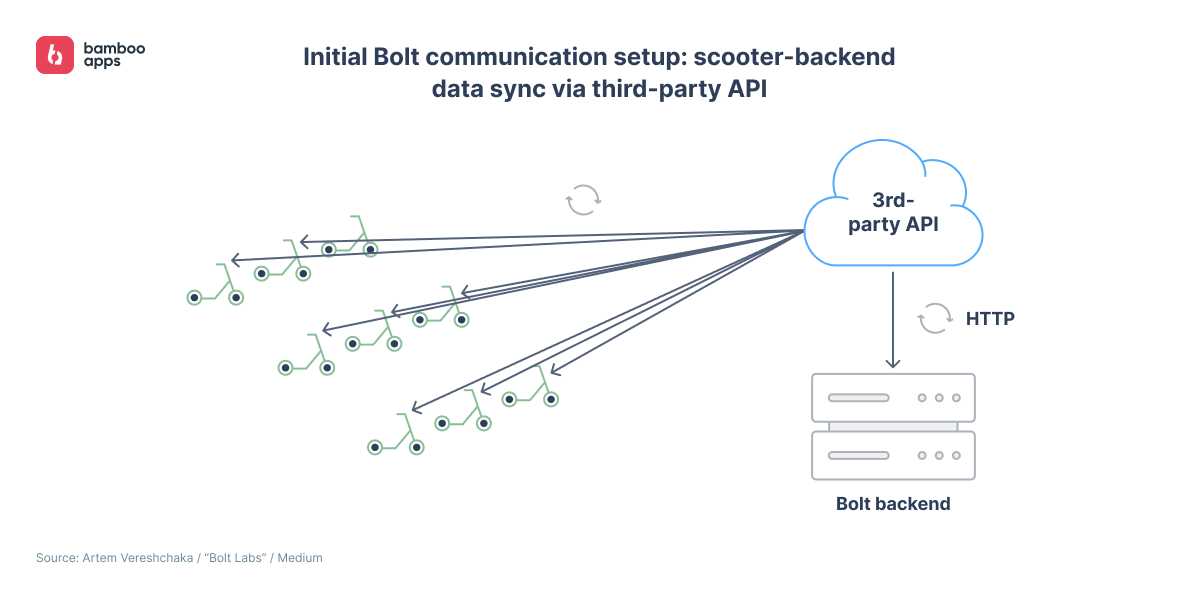

Bolt: scaling live IoT for scooter sharing software with third-party and proprietary APIs

In a second case study, the Estonian company describes how it resolved latency and data loss issues by rethinking its early data exchange system.

In its early days, Bolt relied on a third-party API bundled with prebuilt IoT modules, using HTTPS and webhooks. This made it easy to launch the service quickly, but the limitations soon became clear: scooter status updates were delayed, some messages were lost, and the external API lacked the flexibility to scale the communication logic.

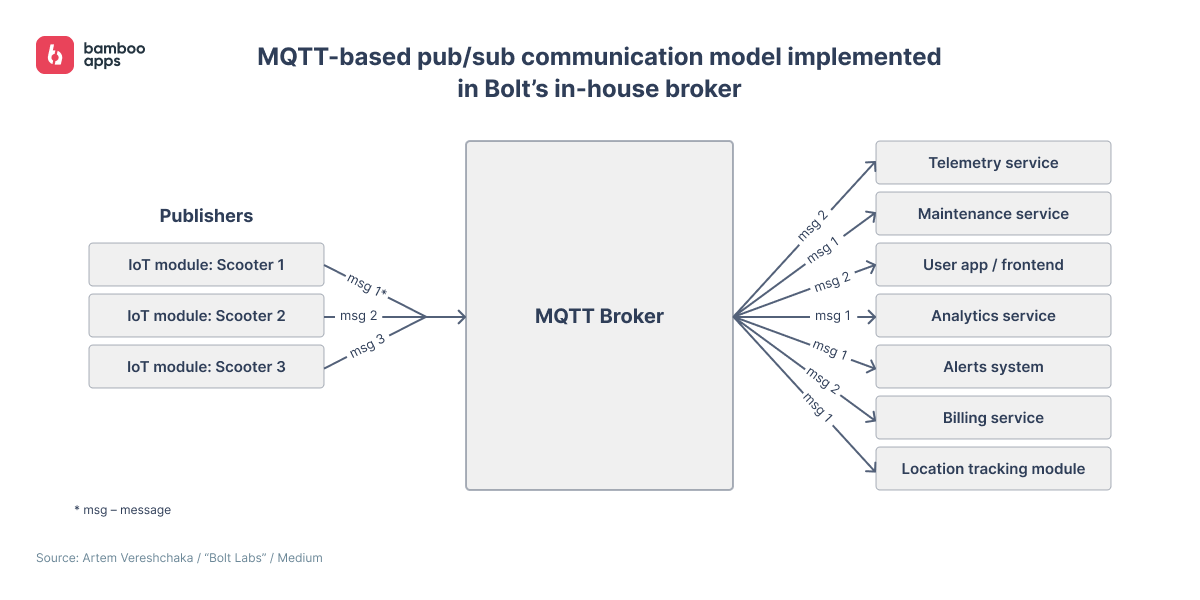

Eventually, the operator decided to develop its own IoT module and a more robust, MQTT-based data exchange infrastructure.

The author of the case study highlights how MQTT differs from traditional message queues, emphasising its core publish/subscribe model. Messages are published to dynamic topics that can have multiple subscribers at once, with support for wildcards to enable flexible routing. Unlike message queue systems that store messages until a single client consumes them, MQTT doesn’t retain messages by default – except for special types like retained messages or last will messages. This meant the team had to plan for the fact that offline clients might miss certain messages.
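
Wildcard routing is easier to reason about with a small matcher in hand. The function below follows the standard MQTT rules for `+` (exactly one level) and `#` (all remaining levels, including the parent itself), but it is a simplified sketch: it skips the special handling of topics beginning with `$`, and the topic names are hypothetical rather than Bolt's.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Check an MQTT topic against a filter using `+` and `#` wildcards."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for index, level in enumerate(filter_levels):
        if level == "#":
            # Multi-level wildcard: only valid as the last level of the filter.
            return index == len(filter_levels) - 1
        if index >= len(topic_levels):
            return False
        if level != "+" and level != topic_levels[index]:
            return False
    return len(filter_levels) == len(topic_levels)


# Hypothetical topic layout: scooters/<id>/<channel>
all_telemetry = topic_matches("scooters/+/telemetry", "scooters/42/telemetry")   # True
one_scooter = topic_matches("scooters/42/#", "scooters/42/commands/unlock")      # True
wrong_channel = topic_matches("scooters/+/telemetry", "scooters/42/commands")    # False
```

A fleet dashboard could subscribe with the first filter to receive telemetry from every scooter, while a per-vehicle service would use the second to follow everything about a single device.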

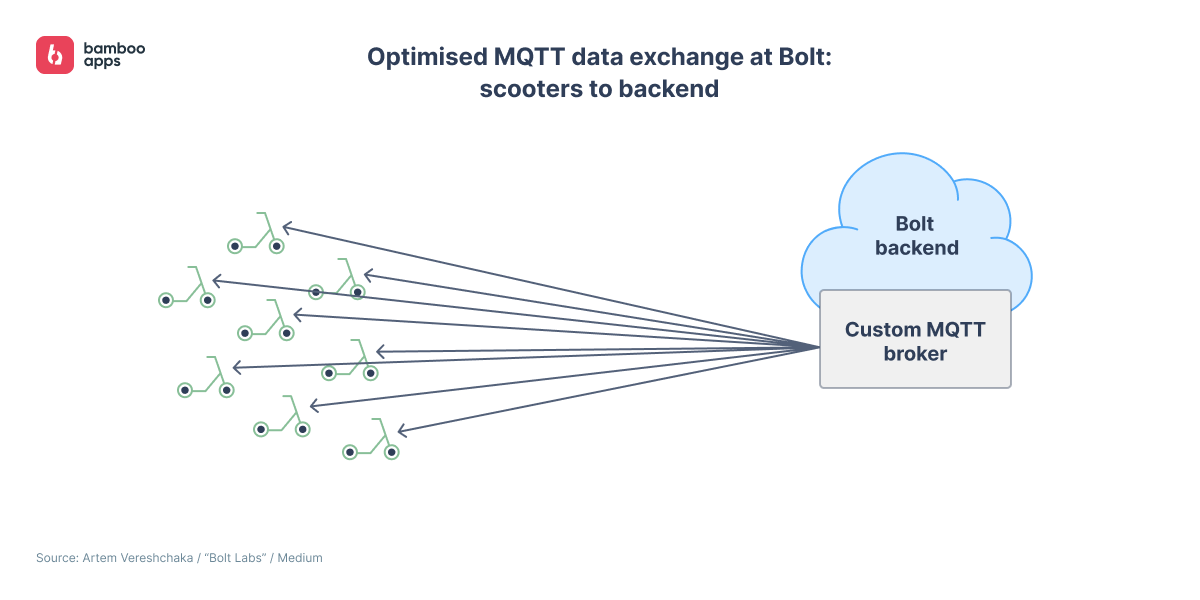

This behaviour shaped the way Bolt implemented its communication layer. To build a reliable MQTT-based infrastructure, Bolt developed its own broker. It started with prototypes based on Eclipse Mosquitto, then switched to the more flexible Aedes, which could be embedded directly into its Node.js services.

This approach allowed Bolt to extend the broker’s capabilities to fit its own needs: from advanced client authentication to fine-grained monitoring and scaling controls. A Redis-based persistence layer ensured synchronisation of persistent sessions, retained messages, and quality of service (QoS) messages across multiple brokers. In the final architecture, brokers were load-balanced over TCP using the AWS Network Load Balancer (NLB).

Switching from a third-party to a fully custom data exchange solution helped Bolt reduce latency in telemetry and commands, cut message loss, and take full control of the communication layer – without being limited by an external API. This was a key step in scaling the platform.

Scooter Rental: optimising nighttime data exchange and handling forced trip termination

On its website, Diversido briefly outlines the development of the Scooter Rental app for a shared motor scooter operator. The description is concise, but still highlights a few noteworthy technical aspects.

The forced trip termination feature is especially important for motor scooters: they’re more likely than e-scooters to be left in underground parking, where the signal is weak. It works offline using local data and Bluetooth Low Energy (BLE), with automatic server sync once the connection is restored.

To save energy and reduce network usage, the team implemented a sleep mode for scooters. It can be activated from the admin panel and automatically lowers the update frequency to 1–2 minutes, for example, during nighttime hours.
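
The case description doesn't show how the sleep mode is implemented, so the following is only a sketch of the scheduling logic. The night window, the 10-second active interval, and the function name are assumptions; only the 1–2 minute sleep interval comes from the description (120 seconds is used here).

```python
from datetime import time


def update_interval_s(now: time, sleep_enabled: bool,
                      night_start: time = time(23, 0), night_end: time = time(6, 0),
                      active_s: int = 10, sleep_s: int = 120) -> int:
    """Pick the telemetry interval in seconds; the night window wraps past midnight."""
    # Assumes night_start is later in the day than night_end (for example 23:00 to 06:00).
    in_night = now >= night_start or now < night_end
    return sleep_s if sleep_enabled and in_night else active_s


night_interval = update_interval_s(time(2, 0), sleep_enabled=True)    # 120
day_interval = update_interval_s(time(14, 0), sleep_enabled=True)     # 10
```

Keeping `sleep_enabled` as an explicit flag mirrors the admin-panel switch: operators can turn the behaviour off for a vehicle that needs close monitoring overnight.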

The tech stack used in the project included:

- Xamarin.Forms for building native apps on iOS and Android;

- Ruby on Rails 6.0.3 for backend logic;

- ActiveAdmin 2.9.0 for the web-based admin panel;

- nRF9160 chip for secure IoT connectivity and processing.

The team later migrated the apps from Xamarin.Forms to .NET MAUI due to end-of-life support.

The approaches outlined in this section help reduce common synchronisation problems in smart mobility platforms, like delayed status updates, failed payments, or unlock issues caused by data transfer errors.

In the next part, we’ll explore how some of the principles from above, including offline-first, translate into UX design practices.
