For years, the car screen acted as a mirror for the smartphone. Platforms such as Apple CarPlay or Android Auto projected selected mobile apps onto the dashboard. While the phone handled the logic, the car display served as a controlled interface with strict design limits.

That model still works for many vehicles. Yet the automotive industry has started to move in a different direction: native HMI app development. Carmakers now build full operating systems inside the vehicle, and these systems run apps directly in the car without phone dependency.

Our team at Bamboo Apps knows how HMI apps reach the car dashboard today. Our goal is to show you the trends in HMI development and answer one burning question – does the car dashboard require a light extension of the mobile product or a separate engineering track?

Why the car dashboard is the new strategic frontier

HMI market numbers offer one way to track the changing dynamics of automotive development. In 2025, the global automotive human-machine interface market already reached USD 27.03 billion – and it is expected to grow to USD 29.54 billion in 2026.

A large part of the change comes from the operating systems that now run directly inside vehicles. One such example is Android Automotive OS (AAOS). Unlike Android Auto, which relies on the smartphone, AAOS runs inside the vehicle hardware itself. Developers publish apps that live on the car system rather than on the phone. As a result, drivers open navigation or media services straight from the dashboard, with no mobile device required.

This new approach to HMI programming attracts major consumer apps, too. For example, Spotify already runs as a native in-vehicle app across several AAOS-powered vehicles, including Polestar 2 and 3, Volvo XC60 with Google built-in, and GM EVs like Blazer EV. Users even report that the AAOS version of Spotify has more functionality compared to the regular free-tier mobile application.

Native HMI applications also open access to something that mirrored apps never touched – vehicle telemetry. A car emits a constant stream of signals, like its speed, battery state, charging status, and range. With the proper permissions, an app can react to these signals in real time. A music service, for instance, might raise the volume to compensate for road noise. In a similar way, a charging app can pull battery data directly from the vehicle itself, then use that information to suggest nearby stations before the battery runs low.

The 2026 HMI technology stack

A car dashboard runs a very different software stack from a phone. Its environment functions more like an embedded system, and it is defined by strict UX rules and hardware limitations.

Android Automotive (AAOS), QNX, and proprietary Linux stacks

In 2026, three platform families dominate vehicle HMI systems. Each one leads to a different development path.

Android Automotive OS naturally appeals to mobile teams, as engineers can reuse parts of an existing Android app for HMI software development. There is a catch, though: the in-car environment imposes strict design rules for navigation, media, and voice controls. Product teams cannot simply transfer their mobile interface, so designers rebuild the UI using automotive-specific libraries like Car UI Library.

Let us say you are building an AAOS podcast app. It might retain its core playback code from the mobile version. The in-dash interface, however, must follow the Android Automotive media template. That template dictates the following:

- the layout

- how drivers interact with it

- how deeply they can browse content.

QNX-based systems appear across premium brands like Audi. This platform runs on a microkernel architecture built for safety-critical electronics. To develop HMI apps for QNX, teams usually use C++, Qt, or proprietary UI frameworks provided by the vehicle manufacturer.

The QNX environment rarely accepts direct ports from consumer mobile apps. Instead, teams build a new interface layer connected to the existing product backend.

Custom Linux stacks appear in many mid-range vehicle platforms – such as infotainment systems in Toyota and Subaru. Manufacturers assemble their own frameworks on top of embedded Linux. They combine Wayland-based graphics stacks with proprietary UI frameworks.

In this case, the development model varies by OEM. Some provide SDKs for third-party partners. Others build the interface internally, then connect external services through APIs.

Middleware and HAL (Hardware Abstraction Layers)

Middleware handles communication between the HMI and vehicle services, such as navigation data or media playback. Automotive middleware frameworks typically depend on message buses or service-oriented architectures.

For example, an EV charging app requests battery state data through a vehicle API. Middleware receives this request and translates it into messages that the battery management system understands.

Beneath this lies the Hardware Abstraction Layer (HAL). Its modules convert software commands into instructions for hardware components. Display controllers, audio chips, GPS receivers, and vehicle sensors all depend on these adapters. Without the HAL, every application would need separate integration for each hardware variation.

Even a basic function like playing audio can pass through several layers before reaching the car’s speakers:

- The app starts the media framework

- Middleware directs the request

- HAL modules talk to the audio controller.
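
The layering above can be illustrated with a minimal, platform-neutral sketch. The class names (`MediaApp`, `Middleware`, `AudioHal`) and the `audio.play` service name are hypothetical and do not correspond to any real automotive SDK; the point is only that the app never addresses hardware directly.

```python
from dataclasses import dataclass, field


@dataclass
class AudioHal:
    """Hypothetical HAL module: the only layer that knows the audio controller."""
    log: list = field(default_factory=list)

    def write_to_controller(self, stream_id: int, volume: int) -> None:
        # A real HAL would issue driver calls here; this sketch only records them.
        self.log.append(f"hal: stream {stream_id} at volume {volume}")


@dataclass
class Middleware:
    """Hypothetical middleware: routes service requests to the right HAL module."""
    audio_hal: AudioHal
    log: list = field(default_factory=list)

    def route(self, service: str, payload: dict) -> None:
        self.log.append(f"middleware: routing '{service}' request")
        if service != "audio.play":
            raise ValueError(f"unknown service: {service}")
        self.audio_hal.write_to_controller(payload["stream_id"], payload["volume"])


@dataclass
class MediaApp:
    """The app talks to middleware only and never touches hardware directly."""
    middleware: Middleware

    def play(self, stream_id: int, volume: int = 10) -> None:
        self.middleware.route("audio.play", {"stream_id": stream_id, "volume": volume})


hal = AudioHal()
bus = Middleware(audio_hal=hal)
MediaApp(middleware=bus).play(stream_id=7, volume=12)
print(bus.log + hal.log)
```

Swapping `AudioHal` for a module that targets a different audio chip would leave `MediaApp` unchanged, which is the benefit the HAL is meant to provide.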

Over-the-Air (OTA) software updates

Once, vehicle software updates required a trip to the service center. However, connected vehicles have made that model obsolete.

Manufacturers now distribute over-the-air (OTA) packages that update dashboard displays and fix software bugs, as well as release new features. As a result, HMI applications now reach production through a different release process.

Automotive OTA pipelines operate under tighter constraints than mobile app stores, since a flawed update could disable the infotainment system across an entire vehicle line. To prevent this, carmakers release updates in staged packages that include rollback capabilities.
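
A staged release with rollback can be sketched roughly as follows. This is an illustration under assumptions, not any carmaker's actual pipeline: the stage fractions, the 1% failure threshold, and the `failure_rate_for` callback are all hypothetical.

```python
def staged_rollout(stages, failure_rate_for, max_failure_rate=0.01):
    """Walk through rollout stages and roll back as soon as one is unhealthy.

    stages: fleet fractions in increasing order, e.g. [0.01, 0.10, 1.0]
    failure_rate_for: callable returning the observed failure rate for a stage
    """
    completed = []
    for fraction in stages:
        rate = failure_rate_for(fraction)
        if rate > max_failure_rate:
            # Stop here; vehicles already updated would get the previous image back.
            return {"status": "rolled_back", "failed_at": fraction, "completed": completed}
        completed.append(fraction)
    return {"status": "released", "failed_at": None, "completed": completed}


# Made-up health data: the 10% stage surfaces a regression.
observed = {0.01: 0.002, 0.10: 0.04, 1.0: 0.0}
result = staged_rollout([0.01, 0.10, 1.0], observed.get)
print(result)
```

In this example the rollout stops at the 10% stage, so the flawed package never reaches the full vehicle line.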

When an HMI feature update arrives as part of a system image upgrade, the manufacturer first validates its compatibility with the vehicle firmware before distribution.

On AAOS, app updates may go through a specialised Play Store channel for cars. Even then, manufacturers maintain strict certification control.

“Right now, the HMI stack in cars still feels too fragmented, and it slows the whole industry down. I expect that to change in the next few years. In my vision, Android Automotive OS will take a leading role in HMI development. More shared standards across OEMs will become available. At the same time, engineers need to understand system-level orchestration, namely how hypervisors like QNX isolate mixed-criticality apps, how containerised workloads run inside the vehicle, and how failures stay contained. A simple example explains why it matters: a Spotify crash must never affect something like the speedometer or other critical vehicle data.”

Maxim Leykin, Chief Technology Officer at Bamboo Apps

From ‘tap-first’ to ‘safety-first’: the nuances of HMI apps development

Mobile UI adaptation

Native HMI apps follow a different logic than mobile ones right from the start. While mobile patterns may serve as a reference, they rarely transfer without major changes to interaction design.

Designers apply the ‘2-second rule’ to determine how each screen functions. A driver needs to comprehend information almost instantly, whereas phone interfaces often encourage exploration and comparison. In a vehicle, even a brief distraction introduces risk. Consequently, every screen must focus on fast recognition rather than exploration.

Information architecture reflects this reality. In most HMI applications, primary screens show only what supports the immediate task, while details stay hidden until the context allows access. Visual hierarchy becomes stricter, and touch targets grow larger. Why? Because a layout that feels balanced on a phone can overwhelm a driver on a dashboard.

Vehicle state adds another layer of logic. When the gear shifts to Drive, the interface pulls back – browsing flows disappear, and rich metadata gives way to essentials. A media app, for example, might present only playback controls or a short list of content. Once the vehicle enters Park, the interface can expand again to offer search or content discovery.
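
As a rough sketch, this gear-dependent behaviour boils down to a small lookup. The gear names and feature identifiers below are hypothetical, and the rule that every gear other than Park falls back to essentials is an assumption made for the example.

```python
from enum import Enum


class Gear(Enum):
    PARK = "park"
    DRIVE = "drive"
    REVERSE = "reverse"


# Hypothetical feature sets for a media app.
ESSENTIAL = ["playback_controls", "short_content_list"]
EXPANDED = ESSENTIAL + ["search", "content_discovery", "rich_metadata"]


def available_features(gear: Gear) -> list[str]:
    """Return the features the UI may show for the current gear."""
    # Only Park unlocks the full UI; any other gear falls back to essentials.
    return list(EXPANDED) if gear is Gear.PARK else list(ESSENTIAL)


print(available_features(Gear.DRIVE))
print(available_features(Gear.PARK))
```

Keeping the decision in one function makes it easier to audit against an OEM's distraction rules than scattering gear checks across individual screens.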

Display formats pose another challenge. In-vehicle screens vary in width and are sometimes split into multiple zones. Mobile layouts rarely account for such conditions, so native HMI apps have to address these differences from the ground up rather than resizing existing screens.

“Contemporary cars are already quite complex, so we as software developers do not need to complicate things further to the users. As the user’s safety while driving is the most important consideration, a straightforward UI becomes the main factor for guaranteeing that the driver can not be distracted by the app during the trip. It is harder to design and implement such UIs consistently across various screen sizes that we have now across various vehicles, but it is a price worth paying.”

Andrei Mukamolau, Android Developer at Bamboo Apps

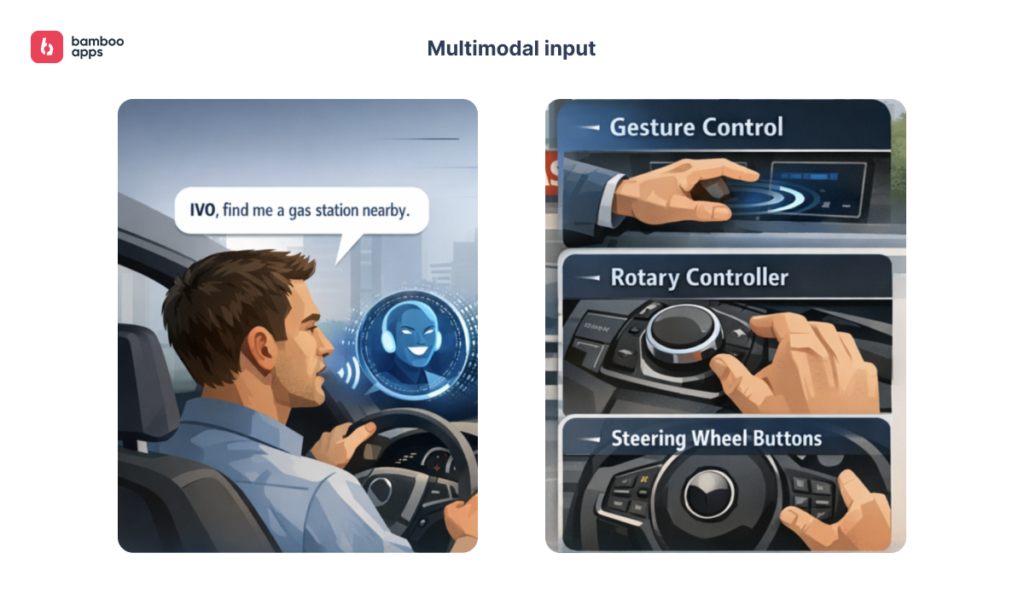

Multimodal input

In HMI software development, voice takes priority. A driver cannot focus on a screen for more than a glance, so every interaction must compete with the road for attention. Drivers expect to speak naturally, without memorising rigid commands. But a simple command‑and‑response model rarely survives real driving conditions. Background noise and fragmented speech all come into play. Consequently, voice design moves closer to conversation design. Systems need intent recognition that adapts in real time, plus fallback options that do not frustrate someone mid‑drive.

At Bamboo Apps, we promote voice control through purpose-built solutions like IVO. Its voice assistant offers drivers helpful tips about their cars’ features and reacts when the system notices something is wrong, such as engine trouble or low tyre pressure. In addition, IVO analyses driver and vehicle behaviour, as well as surroundings, and voices its suggestions for parking, refuelling, and more.

Voice does not work alone, however. Carmakers combine it with other input methods that depend on the vehicle segment. Gesture control appears in some models, though adoption varies. For example, in 2025, BMW discontinued this feature. More importantly, physical interaction remains relevant. In premium vehicles running QNX‑based systems, users often rely on rotary controllers or steering wheel buttons.

‘Automotive-grade’ backend for optimised performance

Network conditions in a vehicle change by the second. Drivers go into a tunnel, and the signal disappears. Or they emerge onto a country road, and latency climbs. When the backend handles every request the same way, the application starts to feel unstable – navigation can pause mid-route or a payment can fail with no explanation.

HMI apps avoid these problems with a strategy called ‘dead-zone logic’. With this approach, the system anticipates areas with weak or no connectivity and prepares ahead of time. For instance, a navigation app can download upcoming route data before the car enters a tunnel. Similarly, a parking app stores session information locally, letting the driver check their active status offline. When the network returns, the backend syncs everything in the background.
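
A minimal sketch of this idea, assuming a hypothetical `DeadZoneCache` class that prefetches route segments while coverage is available and queues events while it is not. The segment ids and the `fetch` callback are made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class DeadZoneCache:
    """Prefetches route segments before coverage drops and queues writes offline."""
    online: bool = True
    segments: dict = field(default_factory=dict)  # segment id -> cached data
    pending: list = field(default_factory=list)   # events waiting for sync
    synced: list = field(default_factory=list)    # events the backend has received

    def prefetch(self, upcoming_ids, fetch):
        # Called while still online, ahead of a known low-coverage stretch.
        for seg_id in upcoming_ids:
            if self.online and seg_id not in self.segments:
                self.segments[seg_id] = fetch(seg_id)

    def get_segment(self, seg_id):
        # Served from local storage; returns None if it was never cached.
        return self.segments.get(seg_id)

    def record(self, event):
        # Send immediately when online, otherwise keep the event locally.
        (self.synced if self.online else self.pending).append(event)

    def reconnect(self):
        # Flush queued events in their original order once the network returns.
        self.online = True
        self.synced.extend(self.pending)
        self.pending.clear()


cache = DeadZoneCache()
cache.prefetch(["tunnel-1", "tunnel-2"], fetch=lambda seg_id: f"geometry for {seg_id}")
cache.online = False                      # the car enters the tunnel
cache.record("parking_session_extended")  # stored locally for now
```

Calling `cache.reconnect()` after the tunnel moves the queued event into `synced`, while `cache.get_segment("tunnel-1")` keeps working the whole time.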

The rhythm of communication between frontend and backend also differs. On a phone, polling every few seconds rarely causes issues. In a vehicle, that same behaviour can overwhelm both the network and the head unit. Grouping requests based on the driving situation helps. The system sends updates in controlled batches, which keeps the interface responsive and reduces strain on the hardware.
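
One way to picture this batching is the sketch below. The `UpdateBatcher` class and its batch sizes are hypothetical; in particular, sending larger, less frequent batches while driving is an assumption made for the example rather than a fixed rule.

```python
class UpdateBatcher:
    """Collects updates and flushes them in batches sized by driving situation."""

    # Hypothetical batch sizes: bigger batches (fewer sends) while driving.
    BATCH_SIZE = {"parked": 1, "driving": 5}

    def __init__(self, send):
        self.send = send  # callable that transmits one batch (a list)
        self.buffer = []

    def add(self, update, situation):
        self.buffer.append(update)
        if len(self.buffer) >= self.BATCH_SIZE[situation]:
            self.flush()

    def flush(self):
        if self.buffer:
            self.send(list(self.buffer))
            self.buffer.clear()


sent = []
batcher = UpdateBatcher(send=sent.append)
for i in range(7):
    batcher.add(f"update-{i}", situation="driving")
batcher.flush()  # push the remainder, e.g. when the car parks
print([len(batch) for batch in sent])  # prints [5, 2]
```

Seven updates produce two network sends instead of seven, which is the kind of reduction that eases the load on both the connection and the head unit.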

Latency-sensitive functions like voice commands or in-car payments need another approach. These flows cannot wait for long trips to a remote server. Thus, HMI app development teams often move parts of the logic closer to the vehicle, sometimes directly onto the head unit, to cut dependence on distant infrastructure and deliver faster responses.

Access to vehicle telemetry

Telemetry turns HMI applications into something context-aware, responsive to surroundings and vehicle activity.

On Android Automotive OS, apps read real-time signals – speed, gear status, battery, range – through the Car API. Data flows directly from the vehicle and allows instant reactions to changing conditions. On BlackBerry QNX, the Message Center Protocol (MCP) fills the same role and bridges the vehicle and application layers with low-latency data.

Because of this foundation, features that go far beyond a mirrored mobile experience become possible. Here are some ideas:

- In a navigation app, reaching a 10% battery state automatically adds charging stops along the route

- A music app switches to voice-only controls when speed exceeds 15 mph

- A fintech application could detect when the car enters a toll zone, then present a one-tap payment option on the screen.
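
The ideas in this list can be expressed as simple rules over a telemetry snapshot. The sketch below is platform-neutral: the `Telemetry` class and the action names are hypothetical stand-ins, and a real app would read these signals through the platform's vehicle API instead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Telemetry:
    speed_mph: float
    battery_percent: float
    in_toll_zone: bool = False


def actions_for(t: Telemetry) -> list[str]:
    """Map one telemetry snapshot to UI actions, using the thresholds listed above."""
    actions = []
    if t.battery_percent <= 10:
        actions.append("add_charging_stops")
    if t.speed_mph > 15:
        actions.append("voice_only_controls")
    if t.in_toll_zone:
        actions.append("offer_one_tap_toll_payment")
    return actions


print(actions_for(Telemetry(speed_mph=30, battery_percent=9)))
```

Because each rule is evaluated independently, a single snapshot can trigger several actions at once, such as low battery and high speed in the example call.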

Security requirements and distraction limits

Car manufacturers do not treat a dashboard like an open platform for HMI apps. Before any feature reaches the driver, it must pass through a series of safety and compliance reviews.

At the core of in-car experience design sit Driver Distraction Guidelines (DDG). These rules cap the time a driver may look away from the road, as well as restrict content shown while the vehicle is moving. A messaging feature that works naturally on a phone may, when moved to the dashboard, require voice-only interaction or lock completely during motion.

Cybersecurity regulations add another set of requirements. Frameworks such as UN R155 and R156 mandate protection throughout a vehicle’s life, including software updates. An app that connects to cloud services or holds user accounts must conform to the car’s security architecture. This means secure communication, controlled update paths, and tight boundaries between internal systems.

Data privacy is a further concern. Vehicles gather sensitive data, like location traces and user profiles. Regional laws, including GDPR, dictate how this information may be stored or shared. A feature that offers personalised suggestions may demand new data flows to stay within legal boundaries or OEM policies.

How Bamboo Apps brings mobile thinking to automotive reality

Bamboo Apps treats HMI applications as a separate product rather than an extension of mobile. Such an approach helps our teams avoid expensive rewrites later and create experiences that feel natural on the road, rather than compressed versions of a phone app.

With over ten years of experience in automotive HMI app development, we partner with leading OEMs to deliver intuitive in-vehicle interfaces. Our teams base their work on user-centered design and the solid engineering practices described above. Additionally, we follow ISO 26262 and Automotive SPICE (ASPICE) to build safe and reliable automotive software.

Validating software in real-life conditions is also one of our priorities. Bamboo Apps uses emulators from Polestar, Stapp Automotive, and TomTom to identify issues early, long before the app goes into production.

So, if you are looking for an experienced tech partner or consultant with real-life expertise in both HMI development and industry regulations, feel free to contact our team.